Linux is one of the most complex collaborative projects in human history. We're talking about a codebase exceeding 40 million lines, fueled by over 2,000 contributors per release cycle. As a technical leader, I keep a close watch on how the kernel community manages this chaos. If a process works here, it's robust enough for any enterprise engineering team.

Back in January of this year, the Linux project quietly codified something that every CTO and Engineering Manager should revisit now that the initial AI hype cycle has cooled: The official regulations for AI Coding Assistants in Kernel Development.

Three months on, this document remains the gold standard for balancing velocity with quality and legal integrity. The core principle is uncompromising: AI is a tool that helps the author, not the author itself.

The established rules are a masterclass in risk management:

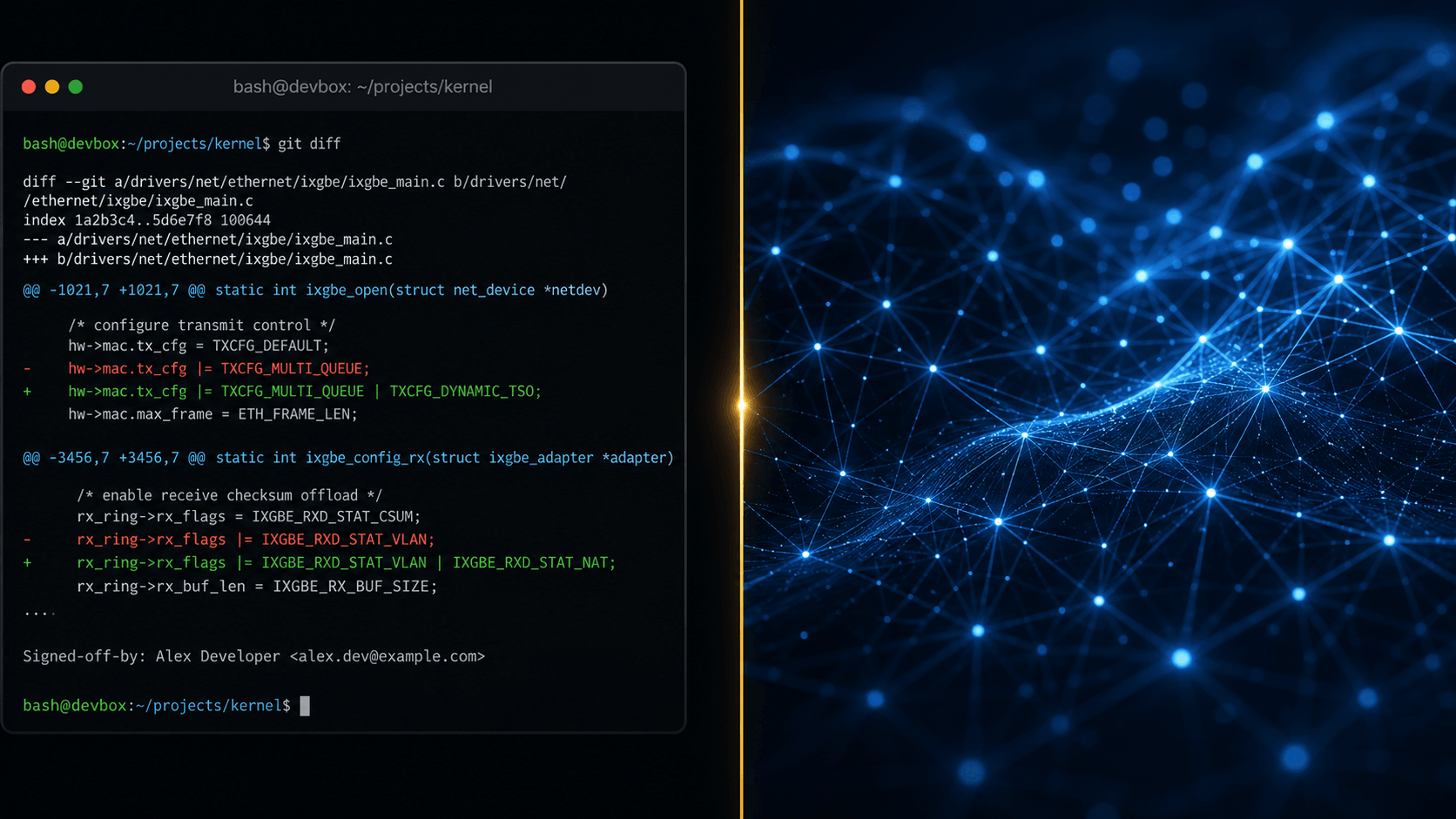

- Sole Human Accountability: AI agents cannot sign off on code. The Signed-off-by tag is a legal certification under the Developer Certificate of Origin (DCO), and only a human developer can own the review, the license compliance, and the bug.

- Transparent Attribution: If a model assisted in generating a patch, it requires an Assisted-by: tag with the specific model version. This isn't about shaming AI use; it's about traceability and debugging.

- No Special Treatment: AI-generated code is not excused from the legendary (and brutal) Linux Kernel Mailing List review process. The bar for quality remains the same.
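For teams that want to borrow the first two rules, here is a minimal sketch of a commit-message check in Python. It is not the kernel's tooling. The function name `check_commit_message`, the `ai_assisted` flag, and the `AI_SIGNER_HINTS` list are hypothetical, and the value after `Assisted-by:` is treated as free text because the sketch does not assume an exact format. A name heuristic cannot prove a signer is human; it only catches the obvious cases.

```python
import re
from typing import List

# "Name <email>" is the usual shape of a Signed-off-by trailer.
SIGNED_OFF = re.compile(
    r"^Signed-off-by:[ \t]*(.+?)[ \t]*<([^<>\s]+@[^<>\s]+)>[ \t]*$", re.M
)
# Assumption: any non-empty value counts; no exact format is enforced here.
ASSISTED_BY = re.compile(r"^Assisted-by:[ \t]*(\S.*)$", re.M)

# Hypothetical hints that a sign-off was written by an AI agent, not a person.
AI_SIGNER_HINTS = ("claude", "copilot", "chatgpt", "gemini")


def check_commit_message(message: str, ai_assisted: bool) -> List[str]:
    """Return a list of policy problems found in a commit message."""
    problems = []
    signers = SIGNED_OFF.findall(message)

    # Rule 1: a human must sign off.
    if not signers:
        problems.append("missing Signed-off-by from a human developer")
    for name, _email in signers:
        if any(hint in name.lower() for hint in AI_SIGNER_HINTS):
            problems.append(f"Signed-off-by must be a human, got: {name}")

    # Rule 2: AI assistance must be disclosed.
    if ai_assisted and not ASSISTED_BY.search(message):
        problems.append("AI-assisted patch is missing an Assisted-by: tag")

    return problems
```

The third rule has no code equivalent: a check like this can confirm that the tags are present, but the review itself stays with people.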

The conversation has shifted from "Should we use AI?" to "How do we own what AI produces?"